If you are a SERP watcher (SERP stands for search engine results page) involved in SEO, you may have noticed a cache relapse over the last few days, with results showing up from weeks or months in the past. Aside from seeing titles shifting about and new pages appearing in the SERPs, what does this mean to you?

The following views are just speculation based on personal observation. To go back to the weekend index and search engine redundancy: whenever new algorithms are blended into the mix, the results are tested and measured to see if they produce the desired impact. Think of it as beta testing a new prototype by taking it for a few laps on the track. If the prototype lives up to expectations, it is adopted as a new rule for relevancy.

Here are a few possible scenarios. Search engines ebb and flow in cycles, switching their emphasis from a very specific niche of keywords to opening the floodgates every few weeks to let the semantic synonyms and stemmed keywords breathe. So, if you notice that your keywords relapse from time to time (or your traffic tapers off at some times of the month and runs like a spigot at others), this phenomenon is a likely culprit.

Let’s assume for the sake of argument that a new rule or weighting factor is introduced to the way spiders cache pages. If a new benchmark were injected into the algorithm (for example, pages with fewer than 3 inbound links are deindexed), the pages that no longer met the threshold would drop out, and that would change the ranking precedence throughout the SERPs. Then, as a chain reaction, new pages rise in relevance to fill the vacuum left by fewer pages competing for specific queries (which is something you may have noticed over the weekend).
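To make the chain reaction concrete, here is a minimal sketch of that hypothetical rule. Everything in it is an assumption for illustration: the `Page` fields, the scores, the example URLs, and the threshold of 3 inbound links (taken from the example above, not from anything Google has published).

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    score: float         # hypothetical relevance score for a single query
    inbound_links: int   # hypothetical count of inbound links


# Example threshold from the text above; not a known Google value.
MIN_INBOUND_LINKS = 3


def rank(pages, min_links=0):
    """Return URLs ordered by score, skipping pages below the link threshold."""
    eligible = [p for p in pages if p.inbound_links >= min_links]
    ordered = sorted(eligible, key=lambda p: p.score, reverse=True)
    return [p.url for p in ordered]


pages = [
    Page("a.example", 0.92, 2),
    Page("b.example", 0.88, 40),
    Page("c.example", 0.75, 1),
    Page("d.example", 0.70, 12),
    Page("e.example", 0.64, 5),
]

before = rank(pages)                     # no threshold applied
after = rank(pages, MIN_INBOUND_LINKS)   # thin-link pages are dropped

print("before:", before)
print("after: ", after)
```

In this toy data set the pages that held positions 1 and 3 fall out, so the page that was fourth moves up to second without any change to its own score. That is the vacuum effect described above.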

We know that the search results you see now are not live; in fact, they are like snapshots taken from aggregate data centers that fluctuate from time to time. Then, based on the components that make it to the next round of redundancy, tweaks and changes are made until the right blend is achieved.

Do we ever know what that blend is? No, but everything leaves a trail, and based on cause and effect, observing the ripples does allow you to see in between the functions from time to time. Another observation from this cache relapse is that external links seem to be making a comeback as one of the main contributors to rankings.

In my estimation, the Google algorithm had been dampened for the past 120 days, when the emphasis shifted away from external backlinks to more of a 70% internal link, 30% external link balance. Now, the formula for most new pages making their debut appears to be 65-70% backlinks (plus some new method for ascertaining relevance) and 30-35% on-page factors.
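As a rough illustration of why a change in that balance reshuffles results, here is a sketch of a two-factor weighted score. The function name, the signal values, and the 0.30 and 0.70 weights are all hypothetical; the real formula is unknown, and this only loosely mirrors the percentages estimated above.

```python
def blended_score(backlink_signal, on_page_signal, backlink_weight):
    """Weighted blend of two signals, each assumed to lie between 0 and 1."""
    on_page_weight = 1.0 - backlink_weight
    return backlink_weight * backlink_signal + on_page_weight * on_page_signal


# Hypothetical pages: A has strong on-page work but few links, B is the reverse.
page_a = {"backlinks": 0.3, "on_page": 0.9}
page_b = {"backlinks": 0.8, "on_page": 0.5}

for weight in (0.30, 0.70):
    a = blended_score(page_a["backlinks"], page_a["on_page"], weight)
    b = blended_score(page_b["backlinks"], page_b["on_page"], weight)
    leader = "A" if a > b else "B"
    print(f"backlink weight {weight:.2f}: A={a:.2f} B={b:.2f} leader={leader}")
```

With the backlink weight at 0.30 the on-page-heavy page leads; at 0.70 the link-heavy page overtakes it, even though neither page changed.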

What does this mean to you and me as SEOs? If you neglected link building from relevant authority sites, you are probably experiencing some major dips for your mid-range keywords, while your more competitive themed keywords stay intact. I have also observed an implosion of PageRank, where PageRank is moving deeper into a site to connect the dots (multiple subfolders sprouting PageRank).

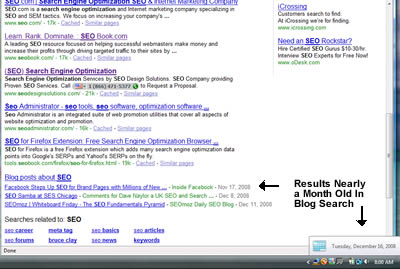

Weeks ago, I noticed old material resurfacing through what could be deemed the mother of all deep crawls from Google, particularly since material that was months old was appearing as newly discovered content according to Google Blog Search. At first it made little sense, and I simply wrote a piece about it in November, but now you can see the significance of its debut.

Did Google Rebuild their Index Right Under Our Nose?

It would appear so (from the top down), and the shakeout was done by deindexing pages that didn’t make the cut. This was also noted by SEO Roundtable. What does it mean for sites that were clipped in mid-cycle? Time to find new platforms for relevance and appease the spiders again.

Sitewide links (things like blogrolls) seem to work well again, as long as they are not done in excess. When we pointed some proprietary tools at many newer sites for analysis, the results revealed some rather alarming insights that I thought would never fly with Google. For example, a 4-page website offering SEO services had 170,000 backlinks (and nearly breached the top 10).

Sorry, but there is nothing natural about a 4-page site with that many links. Not to out anyone here, but getting a few sitewide authority links and then mixing in 40-50 mid-level domains to make it look natural doesn’t quite cut it. In any case, it nearly worked for them (or it will until the facet of the algorithm that controls page weight is adjusted again).

This is the very thing that makes SEO exciting: you have to pay attention to shifts in the SERPs, defend your positions to keep them relevant as they cycle through the various checks and balances, and always be on the alert for new insights.

Organic optimization may not be rocket science, but it does have very distinct patterns that are discernible over time. I look forward to feedback from others to see if they have noticed any other anomalies in Google worth noting.

Is Google doing a dance?

I really enjoy coming back here to read your stuff. It's pure gold. Thank you.

Thanks for the comments to you both. @Danny:

I would not necessarily call it a dance, but more of a shakedown, where who links to you, who links to them, and who links to both you and them is being calculated to see which links are passing the real juice. Volatility of links is something you know I am against; it is better to use internal links (which have more stability) and focus on aging quality content instead to stay buoyant.

@Don:

I appreciate your comments and am glad that you enjoyed the information / theory presented. Thanks for visiting.

Hi Jeffrey.

tsk, months I’m watching this, I have one weekend off and it all goes on without me..

v curious about the 4 page site with 170k too. :)

definitely concur sitewides are back. don't actually think they ever left.

Hey Kev:

Ironically enough, after the index settled, that company with the 4-page site went back to page 6 where they belong. I had to bring back the concept of the weekend index yet again. Good catch on that one. How are things?

things are good my man, and you?

seeing some signs of serp twitchiness today as well, especially on .co.uk and on woopra, lot of people searching filters and algo update queries, usually a good sign of jumpy serps

noticed anything?

I concur. As a matter of fact, one of our blogs sprouted PageRank today, so there may have been a Google PageRank update. On the SERP front, we fell one position for SEO today, which I thought would never happen due to the ranking factors and momentum. Probably not a big deal, but I will keep my eyes peeled and probably write something about it to follow up. Take care, Kev, and Happy Holidays.