Google Analytics and Google Webmaster Tools are a storehouse of data that can be used for search engine optimization. Combined with a few other stellar SEO tools, they create a winning combination for data-mining your own historical benchmarks to establish tangible KPIs (key performance indicators) and ROI thresholds for future keyword and conversion conquests.

Just as the statement above postulates, it’s not the SEO tools you use, it’s HOW you use them that counts. The key is to know where to look and why; armed with that data, you know what to change and how to create optimal pages for search.

Using the techniques elaborated in this post, you can determine the health of a web page from the standpoint of SEO and whether or not it needs more attention, content or links to provide present and future-tense long-term value.

The data set is based on present-tense equity, collective link and anchor text metrics, SERP position and the page’s long-term value as shown by historical data. The premise of Google SEO is that when you give the search engine the cues it needs, it rewards your website with traffic. So, what is the best method to determine whether a page is pulling its own weight from the standpoint of SEO, or what the keyword / traffic lifetime value of a page is? Considerations are:

- How many impressions and clicks per day does the page get?

- How many clicks per month does the page get?

- How many keywords drive traffic to the page?

- How integral for conversion is it to your website?

- How many internal links does that page have?

- What anchor text is predominant?

- How many external inbound links does the page have?

- What inbound anchors are predominant?

- How many variations between the internal and external links are there, and is the page themed appropriately?

Adding another layer such as using historical data to assess performance is critical in putting the data above to work on your behalf. For example: By using the questions above and some SEO common sense I would initially assess:

- How many inbound links are present

- What the anchor text is

- If the links are from related pages

- If there is anchor text diversity

- If the page ranks for more than one variation of a keyword

Also, you could look at past performance such as:

- How many links does the page have?

- Are they relevant?

- Were they acquired naturally or intentionally?

- Does the page have long term value or is it a hub page to build other relevant links to pages with more significance? (Effectively becoming a child page rather than a parent theme or landing page).

If a page in your website ranks for various synonyms, it is often because the links pointing to it carry a diverse array of related anchor text.

For instance, if your target page is about computers, and I link to it with the words computers, processors, motherboards, etc., and the page links out to other pages on processors and motherboards, then the target page and the pages it links to can gain buoyancy in search engines.

Conversely, if the page is consistently linked to with one keyword rather than multiple keywords or key phrases, the likelihood of that page ranking for multiple variations diminishes. Another tactic is to house all of the various keywords on a landing page and then link to it with multiple anchors (both from within the site and by deep linking from other sites).

The culmination of this type of link osmosis acting as a catalyst can transform the page into a catch-all for all semantically themed overlapping keywords and key phrases represented in those links (so long as the on-page elements have similar semantic nodes braided into the meta title, description or on page content).
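To make the idea of anchor text diversity concrete, here is a minimal Python sketch that summarizes the anchors pointing at a single page. The function name, the sample anchors and the idea of using "share of the most common anchor" as a diversity signal are assumptions for illustration, not metrics reported by any particular tool.

```python
from collections import Counter


def anchor_text_profile(anchors):
    """Summarize how varied the anchor text pointing at one page is.

    `anchors` is a list of anchor strings, e.g. copied from a backlink
    report. The output fields are illustrative, not a standard metric.
    """
    # Normalize case and whitespace so "Computers" and "computers" match.
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    counts = Counter(normalized)
    total = sum(counts.values())
    if total == 0:
        return {"total_links": 0, "distinct_anchors": 0,
                "top_anchor": None, "top_anchor_share": 0.0}
    top_anchor, top_count = counts.most_common(1)[0]
    return {
        "total_links": total,
        "distinct_anchors": len(counts),
        "top_anchor": top_anchor,
        # A share close to 1.0 means nearly every link uses the same anchor.
        "top_anchor_share": top_count / total,
    }


# Hypothetical anchors for a page about computers.
sample = ["computers", "Computers", "processors", "motherboards", "computers"]
profile = anchor_text_profile(sample)
print(profile)
```

A page whose top anchor share is very high is the "linked to with one keyword" case described above; a lower share with more distinct anchors is the catch-all case.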

The real value of SEO is not merely to rank for one keyword, but to rank for multiple keywords with such gravity that it would be hard NOT to show up when someone types in a keyword related to your target root phrase or any of its contextual deviations. This goes back to the point about the convergence of on-page factors (keyword prominence and proximity), as well as whether the page is a one-hit wonder or a powerhouse catch-all sales funnel. Both are, to a great extent, within your control based upon:

- Link velocity

- Link diversity

- On page internal links (volume of internal links) and

- Volume of keyword variations

Ironically, with a few minutes, you can glean all of this from Google Analytics and Webmaster Tools. Start by:

- Logging in to Google Analytics to View Traffic Sources > Select Keyword > Set the date from 1 year previous to present, then export that data.

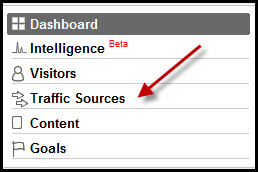

Log in to Google Analytics and "View Traffic Sources".

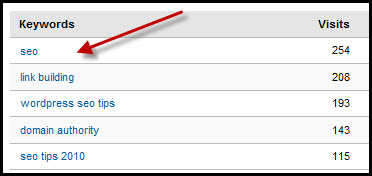

Select the Keyword for Analysis

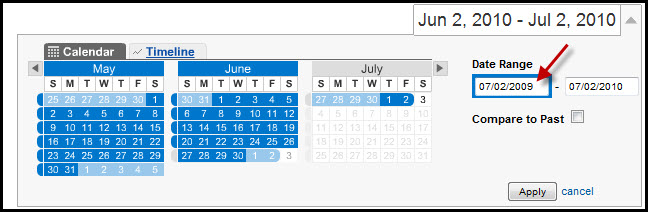

Change the date range to 1 year prior to view historical data then export...

*This allows you to assess historical trends and assess page authority based upon the frequency of traffic and spread of keywords.

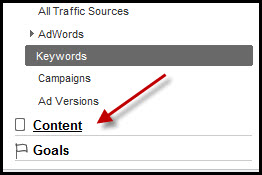

- Then look at the Page Level Analysis by selecting Content > setting the date from 1 year previous to present > clicking Entrance Keywords, then export that data.

Select the Content tab > select 1 year previous to present > then Entrance Keywords (to assess the total and historical spread of keywords per page).
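Once the exports are saved, the per-page keyword tally could be sketched roughly as follows. This assumes the export has been reduced to a CSV with `page`, `keyword` and `visits` columns; those column names and the sample rows are hypothetical, so adjust them to match whatever your export actually contains.

```python
import csv
import io
from collections import defaultdict


def keyword_spread(csv_text):
    """Count distinct entrance keywords and total visits per page.

    Assumes columns named page, keyword and visits (hypothetical names).
    """
    pages = defaultdict(lambda: {"keywords": set(), "visits": 0})
    for row in csv.DictReader(io.StringIO(csv_text)):
        entry = pages[row["page"]]
        entry["keywords"].add(row["keyword"].strip().lower())
        entry["visits"] += int(row["visits"])
    return {
        page: {"keyword_count": len(data["keywords"]), "visits": data["visits"]}
        for page, data in pages.items()
    }


# Made-up sample rows standing in for a one-year export.
sample_export = """page,keyword,visits
/computers,computers,120
/computers,cheap computers,45
/computers,desktop processors,30
/motherboards,motherboards,60
"""

spread = keyword_spread(sample_export)
for page, stats in sorted(spread.items()):
    print(page, stats["keyword_count"], stats["visits"])
```

Pages with a high keyword count relative to their visits are the ones ranking for many variations, which is the spread the one-year date range is meant to expose.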

Now you have a running tab of the keyword you wish to inspect, the page that corresponds to it and the total number of historical “effective” keywords ALSO driving traffic to THAT page. All that remains is to (1) use Google Webmaster Tools to look at the volume of internal links and (2) use a backlink checker to assess the number of deep links and the corresponding anchor text pointing to that page.

Alternatively, you could always use Xenu Link Sleuth (to assess on-page internal links, outbound links, title tags, URL parameters and broken links) and Majestic SEO for a more robust data set to triangulate inbound anchor text data for accuracy.

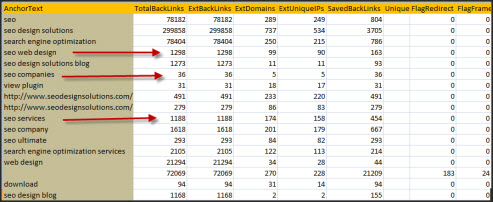

Sample Set of Majestic SEO Anchor Text Report

Taken a step further, you can also use one of my favorite tools, SEM Rush, to map each keyword to its landing page and SERP position (which you can also use in tandem with a search engine result page scrub to assess where those pages sit and to relate click-through rate to traffic, etc.).

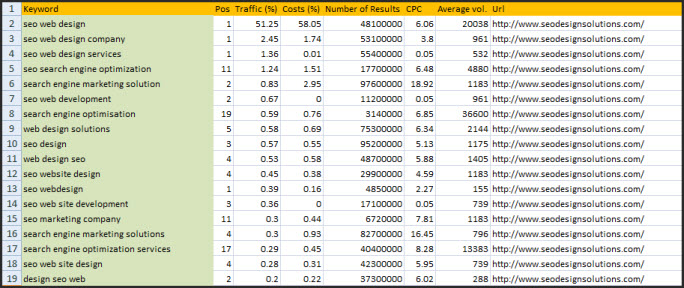

SEM Rush SERP | Keyword | Landing Page Data
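As a small worked example of relating SERP position to click-through rate, the sketch below computes CTR as clicks divided by impressions for a few keyword rows. The rows and field names are invented for illustration and are not taken from any report shown here.

```python
def click_through_rate(clicks, impressions):
    """Return clicks / impressions, or 0.0 when there were no impressions."""
    if impressions <= 0:
        return 0.0
    return clicks / impressions


# Hypothetical keyword rows: position, impressions and clicks are made up.
rows = [
    {"keyword": "computers", "position": 3, "impressions": 2000, "clicks": 120},
    {"keyword": "cheap computers", "position": 1, "impressions": 500, "clicks": 150},
    {"keyword": "desktop processors", "position": 8, "impressions": 1000, "clicks": 10},
]

for row in rows:
    row["ctr"] = click_through_rate(row["clicks"], row["impressions"])

# Sort by position so CTR can be read against rank.
for row in sorted(rows, key=lambda r: r["position"]):
    print(f'{row["position"]:>2}  {row["keyword"]:<20} {row["ctr"]:.1%}')
```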

We firmly believe in using 2-3 tools for a given metric before jumping to premature conclusions. This ensures that the metrics are not retroactively pigeonholed into an assumption based on an involuntary blind spot, egocentric viewpoint or theory, but are validated by various layers of input, analysis and the footprint they leave behind. With that data you can determine:

- What is the threshold of how important that page is to your website

- If it performs or needs tweaking

- If the page has gained any more trust or authority or has become stagnant and is losing relevance and

- What are the off-page factors impacting the authority of that page?

You can set the date range to a month, three months, six months or a year to assess the page’s past history and ranking value / importance. If you see a pattern, favorable or not so favorable, you can address where that page or keyword fits into your overall optimization campaign.
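One way to turn "see a pattern" into something repeatable is to compare the most recent window of monthly visits with the window before it. The sketch below does that; the three-month window and the 10% tolerance are arbitrary assumptions, not thresholds from Google or any tool.

```python
def traffic_trend(monthly_visits, window=3, tolerance=0.10):
    """Label a page as growing, declining or stagnant.

    Compares the average of the last `window` months with the average of
    the `window` months before that. `window` and `tolerance` are
    illustrative defaults, not established benchmarks.
    """
    if len(monthly_visits) < 2 * window:
        raise ValueError("need at least two full windows of data")
    recent = sum(monthly_visits[-window:]) / window
    previous = sum(monthly_visits[-2 * window:-window]) / window
    if previous == 0:
        return "growing" if recent > 0 else "stagnant"
    change = (recent - previous) / previous
    if change > tolerance:
        return "growing"
    if change < -tolerance:
        return "declining"
    return "stagnant"


# Made-up monthly visit counts, oldest first.
print(traffic_trend([100, 100, 100, 150, 150, 150]))
print(traffic_trend([100, 100, 100, 50, 60, 40]))
print(traffic_trend([100, 100, 100, 100, 100, 100]))
```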

Each page represents an opportunity for a short-term boost and long-term strategic advantage if leveraged properly. Leaving old pages dangling and starving from link attrition does not benefit your long-term objective. Look for pages lacking links or traffic and consider 301 redirects to more prominent pages, or consider reviving them with some deep links (from fresh pages) or using them for a drip-down link building campaign to strengthen the weakest link in your website.

Of all the metrics Google assesses, on-page trust takes the longest and is the most difficult to achieve. Why throw that away when you can go back to legacy content (for pages that have stemmed into multiple rankings)? Revise that content or redirect it to a brand new (relevant) landing page to leverage the pent-up ranking factors present in your domain.
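The triage described above could be sketched as a simple rule of thumb. Everything in this example is an assumption made for illustration: the `PageStats` fields, the thresholds, and the choice to revive pages that still rank for something while redirecting those that do not.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    inbound_links: int
    monthly_visits: int
    ranking_keywords: int


def suggest_action(page, min_links=5, min_visits=50):
    """Suggest a next step for a page. Thresholds are hypothetical."""
    low_links = page.inbound_links < min_links
    low_traffic = page.monthly_visits < min_visits
    if low_links and low_traffic:
        # Assumption: a page that still ranks for something is worth reviving.
        if page.ranking_keywords > 0:
            return "revive with deep links"
        return "consider 301 redirect"
    if low_links:
        return "build links"
    if low_traffic:
        return "review content"
    return "leave as is"


# Made-up pages to show each branch.
pages = [
    PageStats("/old-post", inbound_links=1, monthly_visits=10, ranking_keywords=0),
    PageStats("/legacy-guide", inbound_links=2, monthly_visits=20, ranking_keywords=4),
    PageStats("/computers", inbound_links=40, monthly_visits=900, ranking_keywords=25),
]
for page in pages:
    print(page.url, "->", suggest_action(page))
```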

Before you randomly build links or consider building more pages in your website, take a look at the historical long-term performers and you might be surprised that the sleepers you revive can bring new life, fresh traffic and more sales and conversions with less effort, time and energy than you might imagine.

The exercise and the tools we used have implications reaching far beyond this simple notion. The truth is, it’s not the tools, but the one wielding them and the approach, that determines the extent of their function.

Hi, I am from India. I could not stop until I finished reading this post. It gives me a new dimension of thinking on SEO for my pages. I have a few blogs ranking in the top 3 in Google and hope that after applying your theory I will be at #1.

@Animesh:

Those last two positions are the most difficult to conquer, and the first position gets twice as much traffic as the second position, and the third less than the second.

Getting from top 3 to #1 takes link diversity, staggered link velocity and trust, but a few powerhouse links never hurt (nor does a link from the strongest page on the site above you).

What if you’re in a situation where Analytics was not previously installed?

How much do you like SEOMoz’s tools?

1st response: get http://www.clicktale.com installed ASAP and start tracking!

2nd response: They are an effective point of reference when cross-compiling data, but I still favor http://www.majesticseo.com for link analysis (it really maps the history of a domain’s link graph/footprint). I don’t think Linkscape has the range of MJSEO. I still use the moz toolbar for a quick glance at useful metrics.

I can see that you are an expert at your field! I am launching a website soon, and your information will be very useful for me.. Thanks for all your help and wishing you all the success.

The content on your website needs to be fresh, interesting and updated regularly so your human readers and the search engine spiders will come back. The easiest way to ensure that your site gets new content is to have an area on your site for a blog or news section. Then get members of your organization including the CEO to post new and interesting articles.
